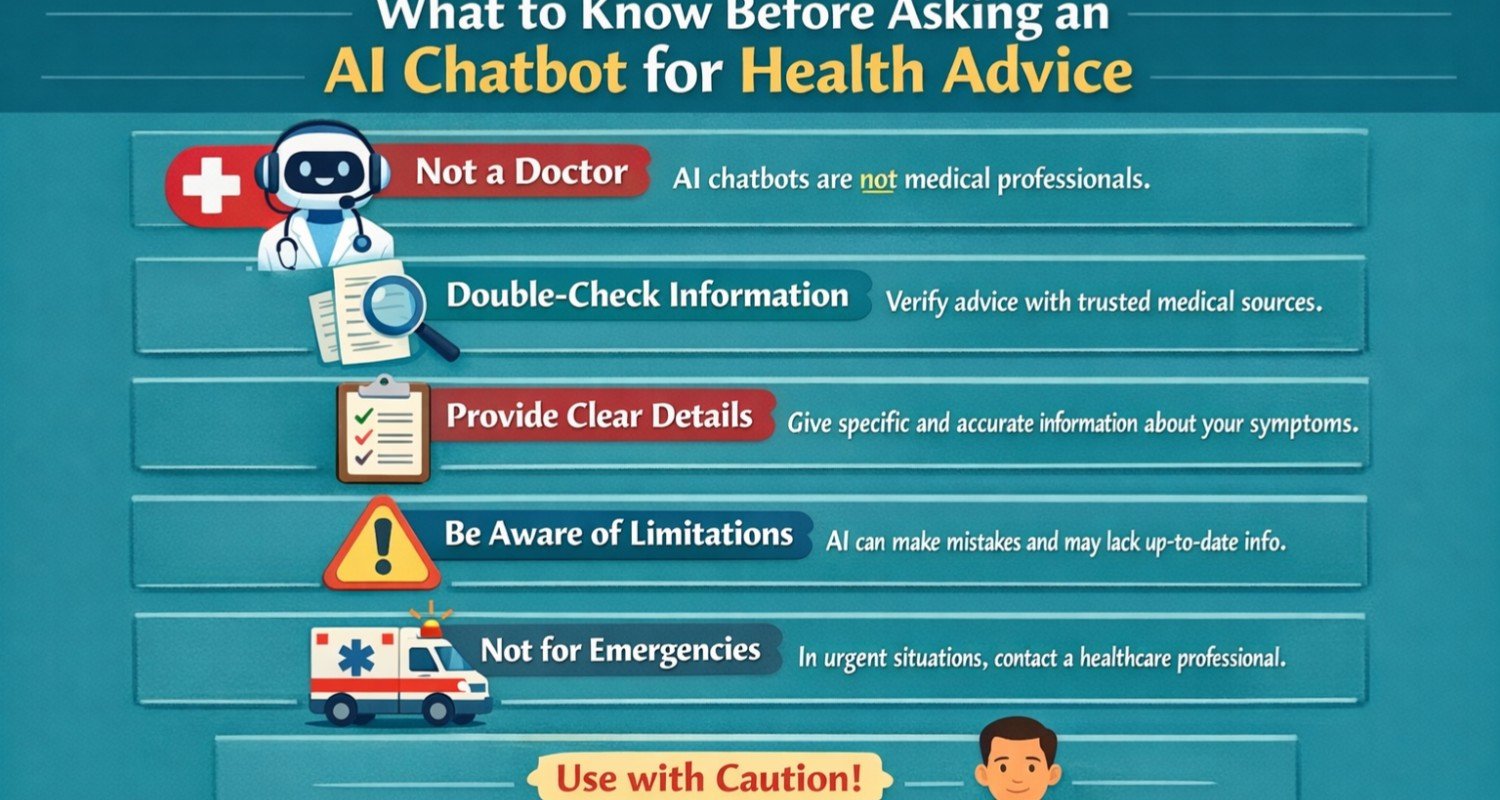

AI chatbots provide quick access to health advice, but they come with serious limitations and risks that users must understand first. Experts warn against relying on them for medical decisions due to possible inaccuracies and ethical issues.

Key Risks

AI chatbots like ChatGPT and Claude, and generative AI chatbots in general, often “hallucinate” facts or give confident but wrong answers on health topics. For instance, they might suggest incorrect diagnoses, unsafe treatments, or even invent body parts when asked about symptoms. This is especially dangerous for heart health advice or mental health queries, where misuse (sometimes called AI chatbot abuse) can lead to patient harm.

Mental health AI chatbots, such as Woebot or other AI “therapist” chatbots, often violate ethics standards. They lack true empathy, fail to adapt to personal context, and reinforce false beliefs instead of collaborating therapeutically. Studies show that unfiltered or uncensored AI chatbots amplify biases, worsening health disparities in areas such as general and oral health advice.

Privacy is another issue with tools like OpenAI's ChatGPT and free health AI chatbot apps. Shared data isn’t protected the way doctor-patient information is, risking exposure on online chatbot platforms or in AI mental health chatbot apps.

Common Pitfalls

Users often treat chatbots like doctors, asking leading questions about dosages or next steps for assumed conditions. This amplifies errors, as seen in health chatbots downplaying emergencies like chest pain.

The distinction between a chatbot and conversational AI matters: generative models prioritize fluent responses over accuracy, leading to issues in AI chatbots for mental health or health insurance advice.

AI chatbot news highlights new features like ChatGPT Health or Claude health tools. These analyze records but still require doctor verification.

ECRI ranks misuse of AI chatbots as a top health technology hazard for 2026.

Safe Usage Tips

Verify all outputs with professionals: never skip doctors for health and wellness advice, health advice for seniors, or mould health advice. Use chatbots for basics like explaining terms from reports, not for diagnoses.

Health systems should audit AI tools through governance processes, including tools built on AI chatbot platforms or Vercel AI chatbot builds. Creators working out how to make an AI chatbot should include disclaimers, such as a health advice disclaimer.

Avoid sensitive topics: a free mental health chatbot or AI-powered chatbot for mental health can’t replace therapy. Check for biases in training data to keep health and safety advice equitable.
