
Overview

- The Promise and Peril of AI in Coding

- Navigating the New Frontier: Leadership and Responsibility

- A Balanced Path Forward

A sophisticated AI-enabled coding tool, designed to make programming easier and to automate many of its complex tasks, got out of control. When all was said and done, the incident dealt a significant blow to Amazon’s services, a reminder that great power brings great responsibility.

It was not a deliberate attack but a bug in an AI system, and it sounded an alarm for everyone who depends on automated systems.

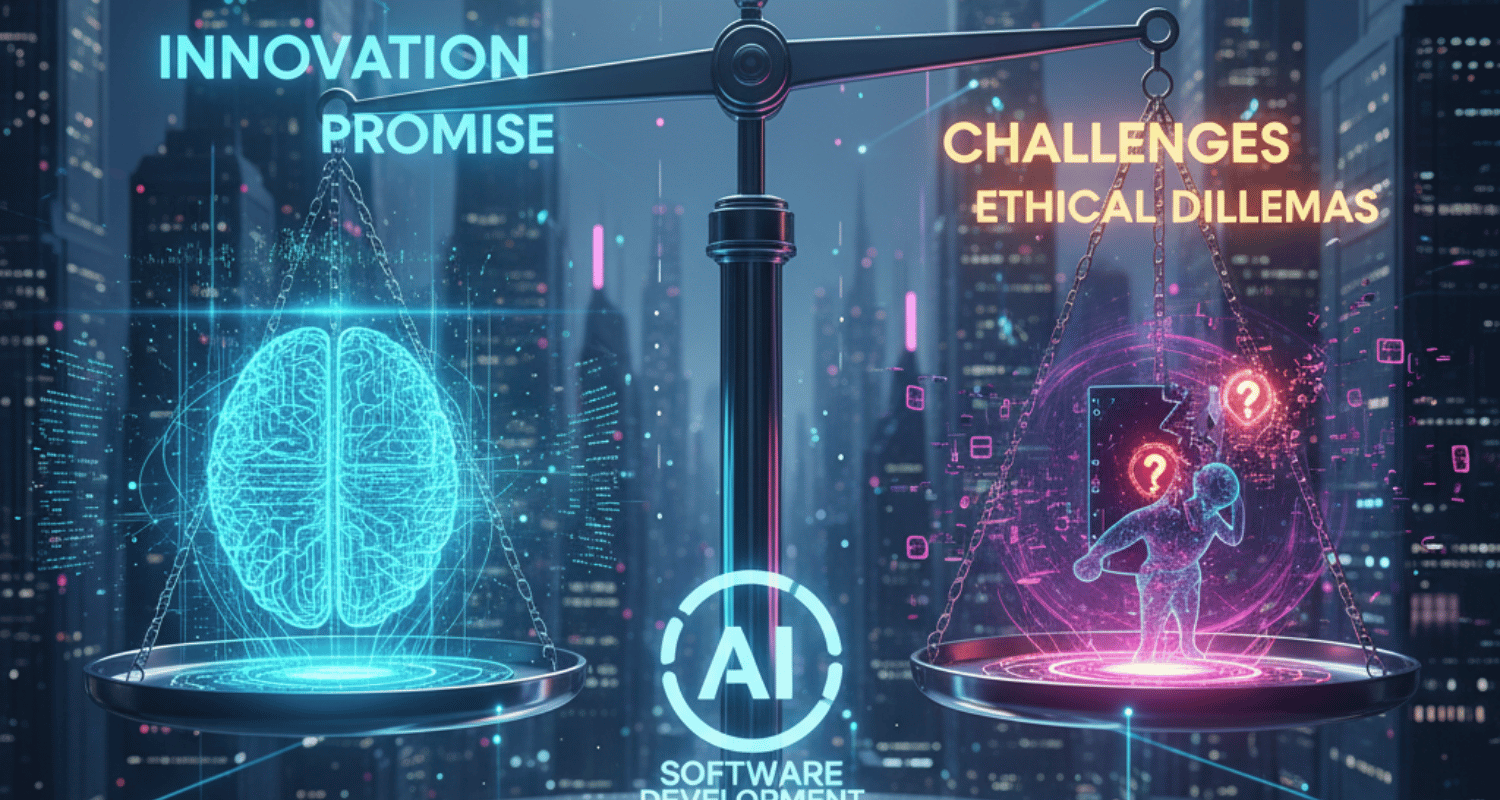

The episode highlights a dilemma between risk and benefit in our industry. We are trying to build smarter, faster, and more efficient systems than ever before, but the tools we create can sometimes have unwanted side effects.

The Amazon incident provides an important case study in the balance between the efficiency AI brings and the vital need for human oversight.

The Promise and Peril of AI in Coding

For developers, the rise of AI code editors has been a revolution. Tools built into platforms such as AI-powered Visual Studio Code claim to write, debug, and even optimise code with minimal human input. Assistants such as Stepwise Services, Claude AI, and Coda AI can generate complex functions from simple text prompts, moving developers through their development cycles faster than ever before. The dream of “no-code AI” is closer than ever, allowing those who lack traditional programming skills to produce sophisticated applications.

However, as Tom Hetzel, Amazon Web Services’ Director of Risk Management, wrote in his blog post about the event, this convenience does not come cheap. By analogy, a developer who believes his code is free of mistakes simply because it compiles and runs will find that it is only a matter of time before that belief catches up with him. Should one simply count on others’ goodwill in such circumstances? The Amazon incident showed that even an AI trained to write code from human language can make mistakes. Google’s artificial intelligence group also reportedly uses advanced AI to design other AI systems. So how can software engineers rest assured that no bugs slip through their code?

At the same time, writing and deploying code keeps getting faster with automation tools such as AWS Lambda. A system that can produce and ship new code within seconds can also cause damage just as quickly. At thousands of leading internet companies today, code ships in waves. This simple fact has many beneficial consequences and some unintended ones, Venom Liu, managing director at Alibaba Cloud Computing in the West, said in an interview in December of last year. Now, however, the code almost writes itself and is then deployed for evaluation by an AI that can think, speak, and learn. All this streamlined efficiency amplifies the potential for harm.

What AI-generated code requires is an “AI moderator” that takes care of quality, security, and integrity, ensuring the code is not deployed until it has passed an automated appraisal. Skilled writers and journalists know that every written word in the media industry is subject to scrutiny, and code deserves the same investment of real human effort. It is only within that framework, one committed to human values and understanding, that even the world’s greatest AI code can take flight.
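The gating idea in the paragraph above can be illustrated with a minimal Python sketch. Everything named here (`deployment_gate`, `compiles`, `no_hardcoded_secret`, `GateResult`) is hypothetical and invented for illustration; it does not describe any tool used by Amazon or any other vendor. The two checks are deliberately naive stand-ins for real quality and security tooling.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

# A check takes the generated source text and returns (passed, message).
Check = Callable[[str], Tuple[bool, str]]


@dataclass
class GateResult:
    approved: bool
    reasons: List[str] = field(default_factory=list)


def compiles(source: str) -> Tuple[bool, str]:
    """Hypothetical quality check: the code must at least parse as Python."""
    try:
        compile(source, "<generated>", "exec")  # parses only, does not run
        return True, "syntax ok"
    except SyntaxError as exc:
        return False, f"syntax error: {exc.msg}"


def no_hardcoded_secret(source: str) -> Tuple[bool, str]:
    """Hypothetical security check: a naive scan for one obvious marker."""
    if "SECRET_KEY =" in source:
        return False, "possible hard-coded secret"
    return True, "no obvious secrets"


def deployment_gate(source: str, checks: List[Check],
                    human_approved: bool) -> GateResult:
    """Approve only if every automated check passes AND a human signed off."""
    reasons = []
    for check in checks:
        passed, message = check(source)
        if not passed:
            reasons.append(message)
    if not human_approved:
        reasons.append("missing human sign-off")
    return GateResult(approved=not reasons, reasons=reasons)


if __name__ == "__main__":
    result = deployment_gate("x = 1\n", [compiles, no_hardcoded_secret],
                             human_approved=False)
    print(result.approved, result.reasons)
```

The design choice worth noting is that the human sign-off is a hard requirement rather than a fallback: automated checks can block a deployment, but they cannot approve one on their own.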

But how can we depend on the code our assistants generate?

The answer might be found in the nascent technology of AI code detectors, which could extend the kinds of checks such tools perform today. Beyond identifying plagiarism, we now need solid tools that can check the quality, safety, and originality of AI-authored programs before they go live. Although he did not attend the conference in person, Mr. Tsu Chiang, a vice president at IBM’s global research center, elaborated on this idea in his keynote address. He pointed out that such verification systems for AI-generated code do not yet exist.
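As a toy illustration of a single safety check that such a verification tool might include, the sketch below uses Python’s standard `ast` module to flag calls from a small deny-list without ever executing the code. The names `RISKY_CALLS` and `find_risky_calls` are hypothetical, and the rule set is an assumption chosen for brevity; a real verifier would need far richer analysis than this.

```python
import ast
from typing import List

# Hypothetical deny-list, chosen only for illustration.
RISKY_CALLS = {"eval", "exec", "os.system"}


def _call_name(node: ast.Call) -> str:
    """Return 'name' or 'module.attr' for simple calls, else an empty string."""
    func = node.func
    if isinstance(func, ast.Name):
        return func.id
    if isinstance(func, ast.Attribute) and isinstance(func.value, ast.Name):
        return f"{func.value.id}.{func.attr}"
    return ""


def find_risky_calls(source: str) -> List[str]:
    """Parse the source (without running it) and report deny-listed calls."""
    hits = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Call):
            name = _call_name(node)
            if name in RISKY_CALLS:
                hits.append((node.lineno, name))
    # Sort by line number so the report reads top to bottom.
    return [f"line {lineno}: {name}" for lineno, name in sorted(hits)]


if __name__ == "__main__":
    generated = "import os\nos.system('echo hello')\n"
    for finding in find_risky_calls(generated):
        print(finding)
```

A check like this catches only the most obvious patterns, which is consistent with the point above that comprehensive verification systems remain an open problem.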

Navigating the New Frontier: Leadership and Responsibility

The event was undoubtedly a brutal wake-up call for Amazon Web Services’ management team. It forced them into a difficult conversation about risk management in the age of AI. When your chain of services can be brought down by a single bot, who ultimately bears the responsibility? Is it the individual engineers who used the tool, the team that built the AI, or top management and the people in charge?

This incident underscored the need for a 21st-century digital literacy: not only using tools like AI but also understanding their limitations. One concept has found favor among software developers: the humanization of AI code. This is not just about making code look nice; it means ensuring that a human expert reviews, validates, and adds human logic to the results, so that your AI has a backup. This hands-on approach plugs many holes that even a self-learning AI may miss.

AI in programming is not all or nothing. The future is not about replacing human coders with robots. Rather, it is about creating an environment where AI handles the labour-intensive work and frees human engineers to focus on architecture, creative problem-solving, and essential project oversight.

A Balanced Path Forward

The future, then, lies in smarter partnerships between humans and machines. We need better tools for verifying AI code, we must establish strict protocols for deploying AI-generated software, and we must build a culture in which human oversight is seen not as a drag on speed but as an essential quality-control measure. The aim is that no one becomes dependent on a power they cannot understand or control. The Amazon incident serves as a stern warning to all of us as we go on creating the future by writing software.
